Virtual Production for the next generation of filmmakers

Located in the heart of Universal Studios Florida, DAVE School develops the skills of budding Visual Effects, Production, and Game Design professionals. It draws on the experience of its award-winning alumni as well as the latest developments in production technology to give students a head start once they enter the industry.

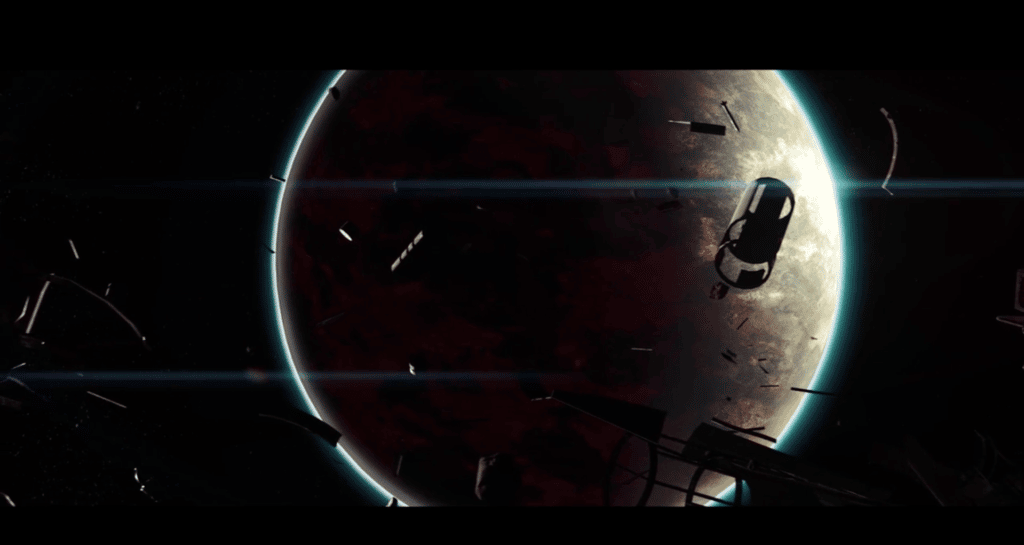

A recent project put together by DAVE School and On-Set Facilities saw students from the Game and VFX programmes collaborate to produce a sci-fi-inspired movie trailer titled Deception. Shot exclusively with the Mo-Sys VP workflow, the 2-minute trailer depicts a high-energy intergalactic battle in which the protagonists are ultimately stranded on a deserted, barren planet.

Real-time virtual production

DAVE School students used real-time virtual production methods to make Deception, reducing the time spent in post-production and improving the overall quality of the trailer. The entire studio set-up and Mo-Sys VP system were put together by virtual production specialists On-Set Facilities and Mo-Sys's own virtual production engineer, Marcus Masdammer.

Image Credit: On-Set Facilities


Real-time virtual production relies on several technologies that let filmmakers shoot and edit virtual or augmented reality scenes while on set. Effects, graphics, and animations can all be created before shooting begins, so producers and actors can see a near-finished version of a scene while filming it and immediately after it has been shot. This not only gives everyone involved greater creative freedom but also reduces production time and costs, because far less post-production editing is needed.

Among the technologies required for real-time virtual production are camera tracking and graphics plug-ins.

Virtual Production – Unreal Engine

The graphics for DAVE School's Deception trailer were rendered in Unreal Engine (UE). As one of the world's most advanced graphics engines, UE is widely regarded as the gold standard across the film and gaming industries.

Image Credit: DAVE School


In film production, however, UE has traditionally entered the picture only during post-production: many virtual studios use lower-quality, proprietary graphics systems during filming and add UE later on.

Mo-Sys VP helps productions cut out this later editing phase by acting as a direct interface between camera tracking systems and UE. With it, producers can bring the full rendering power of Unreal Engine into their real-time virtual production shots, gaining a clearer impression of the final product and, again, saving time and money.

For DAVE School's production of Deception, Mo-Sys VP was paired with our own StarTracker system. Using retro-reflective "stars" attached to the studio ceiling and camera-mounted sensors, StarTracker lets each camera calculate its absolute position, which is then mapped into the computer-generated environment. Because it syncs seamlessly with Mo-Sys VP, StarTracker further supports real-time production techniques and reduces the resources spent on post-production.

Next-gen virtual film production

DAVE School is dedicated to giving its students the best possible preparation for entering the film industry. As well as drawing on the expertise of its veteran tutors, it integrates the latest production technologies into its curricula. Nowhere has this been more evident than in the making of Deception. Working with StarTracker and Mo-Sys VP, students learnt that real-time virtual production not only gives producers unparalleled creative freedom on set but also significantly reduces the cost and time of post-production.

Mo-Sys is leading the way in camera tracking and virtual production techniques. If your organisation is looking to integrate virtual production into its curricula, or you'd like to explore the potential of this technology more generally, please get in touch with our team.