Mo-Sys Develops Cinematic XR Production for LED Stages

Mo-Sys Engineering has developed a new multi-node media server system, VP Pro XR, supporting XR production in LED volumes for final pixel film and TV footage. Aiming to overcome restrictions and compromises that producers encounter on XR stages, the system supports the use of traditional shooting techniques for virtual productions.

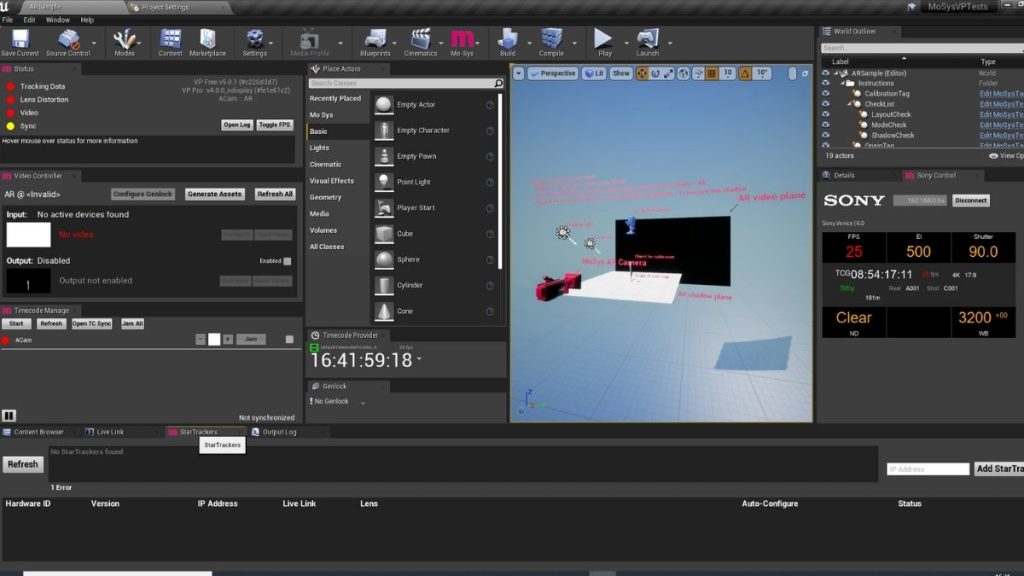

Mo-Sys VP Pro XR is a hardware and software system that combines Unreal Engine's multi-node nDisplay architecture, an updated version of the Mo-Sys VP Pro real-time compositor/synchronizer software, and a new set of XR tools. nDisplay lets users drive very large immersive displays, such as projection domes or arrays of LED screens, where real-time scenes must be rendered at high resolution and kept in sync at high frame rates. With a low system delay of about 6 to 7 frames, the system can capture XR sequences in which live-action talent interacts with motion-captured (mocap) AR avatars.
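To put the quoted 6-to-7-frame delay into time terms: a delay of N frames at a frame rate of f frames per second lasts N/f seconds. The short sketch below converts the figure for a few common frame rates. The frame rates are chosen purely for illustration and are not Mo-Sys specifications.

```python
def delay_ms(frames: int, fps: float) -> float:
    """Convert a delay expressed in whole frames to milliseconds at a given frame rate."""
    return frames * 1000.0 / fps


# Illustrative frame rates (assumed for this example, not Mo-Sys figures).
for fps in (24, 25, 30, 50, 60):
    low, high = delay_ms(6, fps), delay_ms(7, fps)
    print(f"{fps:>2} fps: {low:.1f}-{high:.1f} ms")
```

At 24 fps, for instance, 6 to 7 frames works out to roughly 250 to 292 ms; at 50 fps it is 120 to 140 ms. The same delay in frames therefore becomes shorter in wall-clock time as the frame rate rises.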

Purpose-Built Cinematic XR

Until now, the XR space has been driven largely by live-event equipment companies. Although XR volumes reduce costs by minimizing post-production compositing and location costs, they have also introduced shooting limitations and an output image that some find is not yet comparable to non-real-time compositing. This challenge motivated the development of VP Pro XR.

VP Pro XR is the first product released under Mo-Sys' Cinematic XR initiative. The aim of Cinematic XR is to move final pixel XR production forward, in image quality and creative shooting freedom, from its roots in live-event LED set-ups to purpose-built Cinematic XR. Mo-Sys says final pixel recording, meaning finished-quality imagery created in-camera through live LED-wall virtual production, is achievable but needs further development.

VP Pro XR extends the original Mo-Sys VP Pro software, a real-time compositor, synchronizer and keyer that integrates directly into the Unreal Engine Editor interface and includes tools designed to simplify virtual production workflows. VP Pro records real-time camera and lens tracking data from Mo-Sys tracking hardware such as StarTracker, along with lens focus and zoom and camera setting data for ARRI Alexa and Sony Venice cameras, and uses this data to create virtual production content.
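As an illustration of the kind of per-frame metadata described above, the sketch below models one frame of camera and lens data as a Python dataclass and serializes it as a JSON line. The class name `TrackingSample`, its fields and units, and the JSON output are all hypothetical; they do not reflect Mo-Sys' actual data format or the StarTracker protocol.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class TrackingSample:
    """One frame of camera and lens metadata (hypothetical layout, not a Mo-Sys format)."""

    frame: int                                # frame index within the take
    position_m: tuple[float, float, float]    # camera position in metres (x, y, z)
    rotation_deg: tuple[float, float, float]  # pan, tilt, roll in degrees
    focus_m: float                            # lens focus distance in metres
    zoom_mm: float                            # current focal length in millimetres
    camera_model: str                         # e.g. "ARRI Alexa" or "Sony Venice"
    iso: int                                  # example camera setting
    shutter_angle_deg: float                  # example camera setting


def to_json_line(sample: TrackingSample) -> str:
    """Serialize one sample as a single JSON line (tuples become JSON arrays)."""
    return json.dumps(asdict(sample))


sample = TrackingSample(
    frame=1,
    position_m=(0.0, 1.5, -3.0),
    rotation_deg=(10.0, -2.0, 0.0),
    focus_m=2.4,
    zoom_mm=35.0,
    camera_model="ARRI Alexa",
    iso=800,
    shutter_angle_deg=180.0,
)
print(to_json_line(sample))
```

Keeping tracking, lens and camera-setting values together in one record per frame makes it straightforward to line each record up with the corresponding recorded frame; a real pipeline might key records to timecode rather than a simple frame counter.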

Mo-Sys has identified four key components of Cinematic XR: improving image fidelity, introducing established cinematic shooting techniques to XR, supporting interaction between virtual and real set elements, and developing new hybrid workflows that combine virtual production with non-real-time compositing.

Michael Geissler, CEO of Mo-Sys, said, “Producers love the final pixel XR concept, but cinematographers are concerned about image quality, colour pipeline, mixed lighting and shooting freedom. We started Cinematic XR in response to this challenge, and VP Pro XR is specifically designed to solve problems that our cinematographer and focus puller colleagues have brought to our attention.”

Cinematic XR Focus

The XR media server system, which aims to bring cinematic capabilities and standards to cinematographers and focus pullers, launches shortly after Mo-Sys introduced its Cinematic XR Focus capability in April. Cinematic XR Focus lets users pull focus between real and virtual elements in an LED volume and is now available on VP Pro XR.

Focus pulling has been one of the creative limitations of shooting virtual production in an LED volume. Cinematographers have been unable to pull focus from real foreground objects to virtual objects displayed on an LED wall, such as cars and buildings, because the lens's focal plane effectively stops at the LED wall, so virtual objects that appear to lie behind it cannot be brought into focus.

With Cinematic XR Focus, focus pullers can use the same wireless lens control system they are accustomed to and pull focus from real objects, through the LED wall, onto virtual objects that appear to be positioned behind it. The reverse pull, from virtual to real objects, is also possible.

Cinematic XR Focus is an option for Mo-Sys' VP Pro software working with the Mo-Sys StarTracker camera tracking system. It synchronizes the lens controller with the Unreal Engine graphics output and relies on StarTracker to continuously track the distance between the camera and the LED wall.
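The behaviour described above can be illustrated with a simplified model. The sketch below is an assumption about how a single focus demand might be divided between the physical lens and the virtual camera; it is not Mo-Sys' published algorithm. It treats the camera-to-wall distance as one value supplied each frame by camera tracking (StarTracker in the real system) and ignores curved walls, oblique camera angles and lens calibration. The function name `split_focus` is hypothetical.

```python
def split_focus(requested_m: float, wall_distance_m: float) -> tuple[float, float]:
    """Split one focus demand into (physical lens focus, virtual-camera focus), in metres.

    Hypothetical model: the physical lens is never driven past the LED wall.
    In front of the wall the lens does the work; beyond it, the lens is held
    on the wall and the render engine's virtual focal plane moves instead.
    """
    if requested_m <= 0 or wall_distance_m <= 0:
        raise ValueError("distances must be positive")
    if requested_m <= wall_distance_m:
        # Real object in front of (or at) the wall: focus the real lens there and
        # render the wall content focused at the wall plane.
        return requested_m, wall_distance_m
    # Virtual object "behind" the wall: keep the wall pixels sharp and move the
    # engine's virtual focus to the requested depth.
    return wall_distance_m, requested_m


# A pull from a real object at 1.5 m to a virtual building at 12 m, wall at 4 m.
for demand in (1.5, 3.0, 4.0, 8.0, 12.0):
    lens, virtual = split_focus(demand, wall_distance_m=4.0)
    print(f"demand {demand:4.1f} m -> lens {lens:4.1f} m, virtual {virtual:4.1f} m")
```

Running the loop with the demands in reverse order models the reverse pull, from virtual to real. Because the hand-off happens exactly at the wall distance, the lens and virtual focus values agree at that point, which keeps the transition continuous in this simplified model.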

Read the full article on the Digital Media World website.