The Making of The Weather Channel and Future Group's AR Broadcasts

The Weather Channel have continued to push the visual boundaries of broadcasting by using mixed-reality and dramatic informational graphics to elevate their extreme weather reporting. To do so, they partnered with The Future Group and Mo-Sys, using StarTracker to enable real-time immersive storytelling.

Mike Chesterfield, Director of The Weather Channel's Presentations said: “Utilizing The Future Group's Frontier powered by Unreal, combined with the dynamic power of the Mo-Sys camera tracking system, has already allowed us to take the limits off of how we tell weather stories. Having the ability to create hyper-realistic environments through Unreal enables the viewer to easily imagine themselves in just about any weather situation we are trying to portray.”

We caught up with Justin LaBroad, Senior Software Engineer from The Future Group, at SIGGRAPH this year, where he gave an interesting talk detailing what software and tools were needed to create the mixed-reality graphics. Unfortunately, the video we recorded of the presentation is not of excellent quality, so we have also transcribed it with screenshots below.

The Future Group Demonstration at SIGGRAPH 2018

Presented by Justin LaBroad, Senior Software Engineer at The Future Group.

Recorded on 12th August 2018.

“In order to do live AR for broadcast we had to create our own version of the Unreal Engine that we’re calling Frontier. Frontier gives us broadcast video input and output of the engine itself; we do this with an AJA Corvid 88 or an AJA Kona card. We also need to implement camera telemetry into the Unreal Engine. For the camera telemetry we use the Mo-Sys StarTracker, which uses small reflective dots that are stuck to the ceiling of the studio. The StarTracker sensor picks up all these dots so we know exactly where the camera is in the studio at all times.”

“We also implement an internal compositing pipeline with colour grading and tone mapping in Unreal Engine, allowing us to adjust the lighting and the actual talent themselves in the virtual environment so that they match the real world. Our external keyer is a Blackmagic Ultimatte, and we’ve also spec’ed out two Silverdraft supercomputing PCs with an NVIDIA Titan V; we use 128 GB of RAM for the EXR sequence of the tornado itself. We also implement internal AR compositing, where we take the broadcast video from the camera and overlay augmented reality items on top of it.”
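The overlay step described above can be illustrated with the standard "over" operator. This is only a minimal sketch, not Frontier's actual compositor, which the talk does not detail. It assumes straight (non-premultiplied) alpha and colour values in the 0 to 1 range, and the function names `over` and `composite_frame` are hypothetical.

```python
def over(fg_rgb, fg_alpha, bg_rgb):
    """Blend one straight-alpha AR pixel over an opaque camera pixel."""
    if not 0.0 <= fg_alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    # Each channel is a weighted mix: AR colour scaled by its coverage,
    # camera colour scaled by whatever coverage is left.
    return tuple(f * fg_alpha + b * (1.0 - fg_alpha)
                 for f, b in zip(fg_rgb, bg_rgb))


def composite_frame(ar_layer, camera_frame):
    """Overlay an AR layer on a camera frame of the same size.

    ar_layer:     rows of (r, g, b, a) tuples (hypothetical layout)
    camera_frame: rows of (r, g, b) tuples
    """
    return [
        [over(px[:3], px[3], bg) for px, bg in zip(ar_row, cam_row)]
        for ar_row, cam_row in zip(ar_layer, camera_frame)
    ]


if __name__ == "__main__":
    # A half-transparent red AR pixel over a blue camera pixel.
    print(over((1.0, 0.0, 0.0), 0.5, (0.0, 0.0, 1.0)))
```

Where the AR layer has zero alpha the camera picture passes through untouched, which is why only the AR items appear "on top of" the broadcast video.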

3D scan of studio 9 at The Weather Channel

“One of the first things we had to do was scan the entire Weather Channel Studio 9, which we needed for the destruction shots and the aftermath transitions you saw. We took a LiDAR scan of the actual studio and used the point cloud as reference for rebuilding the 3D geometry. Our textures were based on reference photography that was projected into Substance in [Foundry’s] Mari, and we used 2K+ textures for all our objects. One of the main positives of using a virtual studio is that we could check the camera and lens calibration and know that the AR items are going to line up with the actual items in the studio itself.”

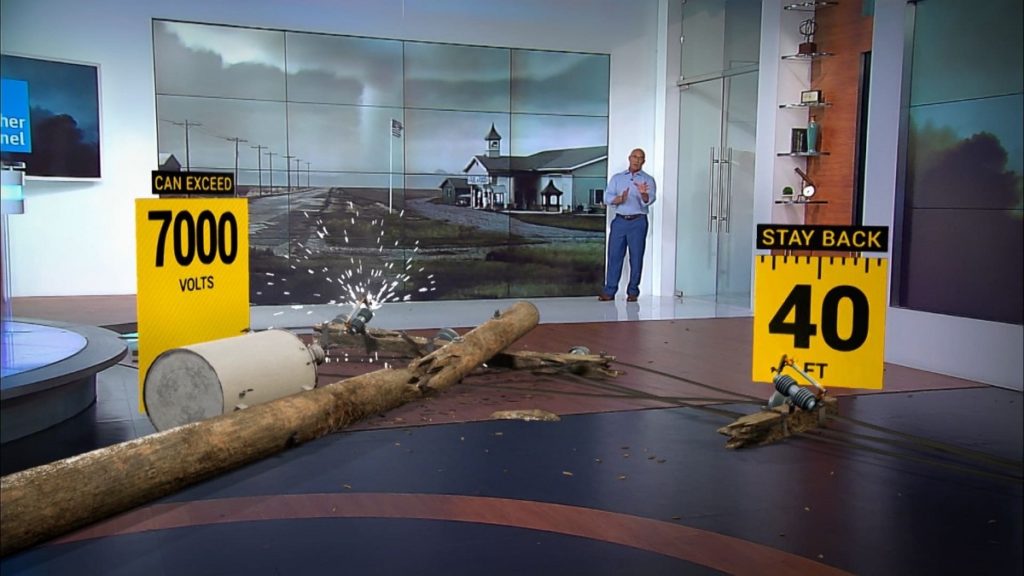

Detailed AR objects overlaid, with shadows cast on all surfaces.

“Adding to this, we were able to create subtle effects that integrated the AR items a little better inside the studio. For instance, as the telephone pole is falling into the studio we were able to cast shadows on all the different surfaces of the walls and the floor in real time. In some cases we actually just replaced the real wall in the studio with a completely virtual wall for the two-by-four construction, so as it smashes into the side of the studio, we can actually have the vases fall off the wall and crash onto the floor.”

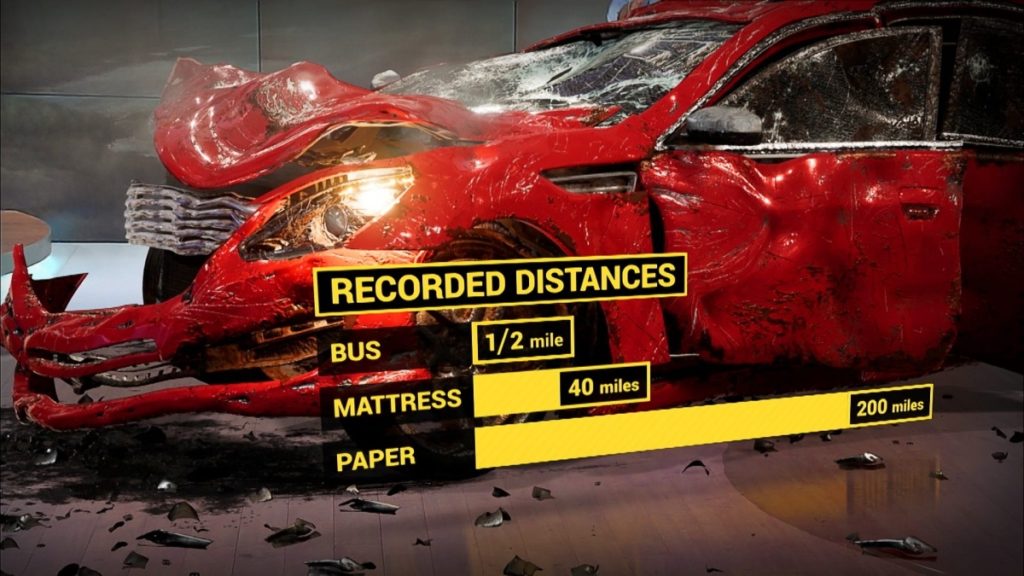

2K textured car with additional infographics

“One thing that is a little bit different in a broadcast environment is that, while there is a certain script and a certain flow for the segments they’re going to create, the camera operator will make decisions on the fly. They might work things out and change things, so when we’re building a virtual set we have to make sure that when they zoom in anywhere inside the set there is full resolution. We used 2K textures everywhere to do this. If you zoom in on that little shelf in the corner you can see all the books in full resolution. We could afford to account for this because the scope is relatively limited. We’re not going to run out of texture memory because we’re just using a certain area of a studio; we’re not doing really large environments.”
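To give a feel for the texture-memory trade-off mentioned here, the rough arithmetic can be sketched as follows. The talk does not state texture formats or counts, so the figures are assumptions: uncompressed 8-bit RGBA (4 bytes per pixel), square 2048 x 2048 textures with a full mipmap chain, and a hypothetical count of 200 textures. Real projects typically use block compression, which would shrink these numbers considerably.

```python
def texture_bytes(size, bytes_per_pixel=4, mipmaps=True):
    """Approximate memory for one square texture of `size` x `size` pixels.

    Assumes an uncompressed format; with mipmaps, every level down to
    1 x 1 is included (roughly a third more than the base level alone).
    """
    total = size * size * bytes_per_pixel
    while mipmaps and size > 1:
        size //= 2
        total += size * size * bytes_per_pixel
    return total


def set_budget_bytes(texture_count, size=2048):
    """Total memory for `texture_count` textures of the same size."""
    return texture_count * texture_bytes(size)


if __name__ == "__main__":
    one = texture_bytes(2048)
    print(f"one 2K texture: {one / 2**20:.1f} MiB")
    # 200 is a made-up count for illustration, not a figure from the talk.
    print(f"200 textures:   {set_budget_bytes(200) / 2**30:.2f} GiB")
```

Because the cost scales linearly with the number of textures, a set limited to one studio keeps the total bounded, which matches the reasoning given in the talk.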

Jim Cantore immersed in the aftermath of the tornado

“The Weather Channel wanted to do their monitor as a window looking out into the world. So as the camera moved they wanted to have depth and be able to see outside the window itself. We didn’t use a greenscreen for the monitor because we wanted Jim Cantore to be able to react to everything that was happening as it happened. We used an off-axis camera projection inside Unreal, so that what’s being rendered on the monitor is being rendered from where the camera is, and we tracked that through the Mo-Sys StarTracker system. This means that as the camera moves around the room, with a person standing in the room, it doesn’t look strange. To do this, we had to modify Unreal Engine’s camera projection through a plugin to track that perspective.”
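The off-axis projection idea can be sketched independently of Unreal. The example below is not the plugin described in the talk. It follows the commonly used generalized perspective projection approach: given the three corners of the physical monitor and the tracked camera position, it computes the asymmetric frustum extents at the near plane. All names (`off_axis_frustum`, the corner parameters) and the coordinates in the usage example are hypothetical.

```python
import math


def _sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def _normalize(v):
    length = math.sqrt(_dot(v, v))
    return tuple(x / length for x in v)


def off_axis_frustum(lower_left, lower_right, upper_left, eye, near):
    """Return (left, right, bottom, top) frustum extents at the near plane.

    The three corners describe the monitor in world space; `eye` is the
    tracked camera position in front of it.
    """
    right_axis = _normalize(_sub(lower_right, lower_left))
    up_axis = _normalize(_sub(upper_left, lower_left))
    normal = _normalize(_cross(right_axis, up_axis))  # points toward the eye

    to_ll = _sub(lower_left, eye)
    to_lr = _sub(lower_right, eye)
    to_ul = _sub(upper_left, eye)

    distance = -_dot(to_ll, normal)  # eye-to-screen-plane distance
    if distance <= 0:
        raise ValueError("eye must be in front of the screen")

    scale = near / distance
    return (_dot(right_axis, to_ll) * scale,
            _dot(right_axis, to_lr) * scale,
            _dot(up_axis, to_ll) * scale,
            _dot(up_axis, to_ul) * scale)


if __name__ == "__main__":
    # A 2 m x 1 m monitor centred at the origin, camera 2 m away and
    # shifted 0.5 m to the right (made-up numbers).
    print(off_axis_frustum((-1, -0.5, 0), (1, -0.5, 0), (-1, 0.5, 0),
                           eye=(0.5, 0, 2), near=0.1))
```

When the camera is centred the frustum is symmetric; as it moves sideways the left and right extents become unequal, which is what makes the monitor read as a window with depth rather than a flat picture.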

“We also immersed the talent with some practical effects. As the talent is in the virtual environment, we want them to be reflected in the virtual items themselves, such as the puddles in the aftermath, but also the other way round: we wanted the AR items to affect the talent in the studio too. We were able to hide physical objects behind AR elements inside the studio to cast a shadow on Jim Cantore’s head as he walks underneath the fake house, giving it a little bit more immersion.”